AI-generated legal summaries: benefits, risks, and best practices

TL;DR:

- AI-generated legal summaries are complex, structured outputs that require verification and source linkage for legal safety. They excel at fast processing and standardized formatting but carry risks of omissions and fabrications, making human review essential. Proper workflows and source-linked tools are critical to ensure responsible, defensible legal decision-making with AI support.

Legal teams often assume that AI-generated legal summaries are a simple copy-paste shortcut. Feed a contract in, get a tidy paragraph out, move on. That assumption is both the appeal and the danger. These summaries are actually complex, structured outputs that require careful workflow design, validation steps, and a clear understanding of where AI judgment ends and human judgment must begin. If your team is adopting AI for contract review, compliance audits, or legal research, understanding what these tools actually do, and where they can fail you, is not optional.

Table of Contents

- What is an AI-generated legal summary?

- How do AI-generated legal summaries actually work?

- Limitations and risks: accuracy, omissions, and real-world edge cases

- Evaluating and validating AI-generated legal summaries

- Integrating AI legal summaries into responsible workflows

- Why true legal defensibility means treating AI summaries as decision-support, not gospel

- Source-linked AI tools for reliable legal summaries

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Factual, structured summaries | AI-generated legal summaries condense key points and obligations into a structured, readable format. |
| Workflow integration | Human verification and clear source links are critical for trustworthy application in legal teams. |
| Benchmark and validate | Regular evaluation with advanced metrics helps ensure quality and defensibility. |
| Beware common risks | Omissions, hallucinations, or loss of sensitive details mean AI summaries must be used with caution. |
| Decision-support, not replacement | Treat AI summaries as a support tool, not a substitute for expert legal review. |

What is an AI-generated legal summary?

AI-generated legal summaries are structured condensations of legal source text, such as contracts, filings, and judgments, produced by AI systems to capture key facts, obligations, risks, and deadlines. They are not paraphrases of the full document. They are targeted extractions, organized for downstream legal work like negotiation, compliance review, or due diligence.

The most common use cases for legal teams include:

- Contract review: Extracting party obligations, payment terms, termination triggers, and liability caps from commercial agreements

- Compliance audits: Flagging statutory obligations or regulatory requirements within internal policies

- Legal research: Condensing case law, statutes, and secondary sources into actionable summaries for brief preparation

- Due diligence: Summarizing risk posture from acquisition target documents in a fraction of the manual time

A core feature that separates a useful AI-generated legal summary from a generic text summary is traceability. Each extracted element should map back to a specific clause or section in the source document. Without that link, you cannot verify the output, which makes it legally unsafe to rely on.

| Source document | Extracted elements | Typical output |
|---|---|---|
| Commercial lease | Term dates, rent escalations, break clauses, tenant obligations | Structured summary with clause references |
| Software license | IP ownership, restrictions, indemnification caps | Risk-flagged terms sheet |
| Regulatory filing | Disclosure deadlines, obligations, penalty clauses | Compliance checklist |
| M&A agreement | Representations, conditions precedent, MAC definitions | Due diligence summary |

Compared to manual summarization, AI summaries can process large document sets far faster and with consistent formatting. But manual review still catches contextual nuance, jurisdiction-specific interpretation, and strategic risk that AI systems frequently miss. Achieving smarter legal compliance requires pairing AI speed with legal expertise, not replacing one with the other. For teams that also need to draft contracts with AI, the same structured, source-linked principles apply.

How do AI-generated legal summaries actually work?

The mechanics behind these summaries matter enormously for legal teams that need reliable outputs. AI summarization commonly uses information extraction combined with structured output generation, rather than just free-form text summarization. This distinction is critical in regulated domains.

At the most fundamental level, the process works in three stages:

- Extraction: The AI identifies legally relevant passages, such as defined terms, obligations, deadlines, and penalty clauses, from the raw document text using pattern recognition, trained classifiers, or large language models (LLMs).

- Structuring: Extracted elements are organized into a consistent schema, like a terms sheet or compliance checklist, so outputs are comparable across documents and easy for reviewers to navigate.

- Synthesis: The system generates plain-language descriptions of each element, condensing clause language into actionable language without (ideally) losing material meaning.

Many modern systems use hybrid pipelines, combining extractive approaches (which select actual sentences from the source) with abstractive approaches (which rephrase or condense content). Extractive steps improve factual accuracy because the output is grounded in real text. Abstractive steps improve readability and allow for synthesis across multiple clauses.
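To make the extractive/abstractive split concrete, here is a minimal Python sketch of one summary element that carries both a verbatim quote and a plain-language description, plus a check that the quote really occurs in the source. The `SummaryElement` schema, its field names, and `is_grounded` are hypothetical illustrations, not any particular product's format.

```python
from dataclasses import dataclass
import re


@dataclass
class SummaryElement:
    """One extracted item in a structured summary (hypothetical schema)."""
    category: str      # e.g. "termination" or "liability_cap"
    clause_ref: str    # where a reviewer should look, e.g. "12.2"
    quote: str         # extractive part: text copied from the source
    description: str   # abstractive part: plain-language restatement


def _normalize(text: str) -> str:
    # Collapse whitespace and ignore case so line wrapping in the source
    # does not cause false mismatches.
    return re.sub(r"\s+", " ", text).strip().lower()


def is_grounded(element: SummaryElement, source_text: str) -> bool:
    """Return True if the element's quote appears verbatim in the source."""
    quote = _normalize(element.quote)
    return bool(quote) and quote in _normalize(source_text)


source = """12.2 Either party may terminate this Agreement
on ninety (90) days' written notice."""

ok = SummaryElement(
    category="termination",
    clause_ref="12.2",
    quote="Either party may terminate this Agreement on ninety (90) days' written notice",
    description="Either side can exit with 90 days' written notice.",
)
invented = SummaryElement(
    category="termination",
    clause_ref="12.3",
    quote="Termination requires payment of an exit fee",
    description="An exit fee applies on termination.",
)

print(is_grounded(ok, source))        # True
print(is_grounded(invented, source))  # False
```

A passing check only confirms that the quoted text exists in the document. Whether the plain-language description faithfully reflects that quote is still a question for a human reviewer.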

| Feature | Structured AI summary | Generic free-form summary |
|---|---|---|
| Source traceability | Clause-level references | None or minimal |
| Output consistency | Standardized schema | Varies by query |
| Downstream usability | Directly feeds review workflows | Requires reformatting |
| Risk flagging | Built-in category tagging | Manual interpretation needed |
| Verification ease | High | Low |

Improving legal research efficiency depends significantly on choosing systems that produce structured, verifiable outputs rather than fluent but untraceable prose. The same logic applies to responsible contract drafting, where source linkage is the first line of defense against errors.

Pro Tip: In regulated domains, always prioritize AI summarization systems that produce structured, source-linked outputs over those that generate polished but unverifiable free-form text. Fluency is not a substitute for accuracy.

Human-in-the-loop checkpoints are not a best-effort add-on. They are the mechanism that makes the entire workflow defensible. At minimum, a qualified reviewer should validate extracted obligations and flag edge cases before any summary is used to support a legal decision.

Limitations and risks: accuracy, omissions, and real-world edge cases

Legal summarization must preserve factual and authority-critical details, and hallucinations and omissions are major failure modes in current systems. In a legal context, a hallucination is not just an interesting quirk. It can mean a fabricated citation, a misquoted obligation, or a non-existent clause that gets used as the basis for a contract position or compliance decision.

“The challenge is not just that AI can generate incorrect information. It is that incorrect information can look indistinguishable from correct information, formatted in the same structure, with the same confident tone.”

Common categories of failure include:

- Hallucinated clauses: The AI invents terms that don’t exist in the source document, particularly under pressure to produce complete summaries from sparse input

- Omitted obligations: Secondary or conditional obligations buried in subclauses are frequently missed, especially in long, complex agreements

- Statutory misapplication: AI systems often apply general legal rules where jurisdiction-specific rules govern, leading to incorrect compliance flags

- Multi-value term confusion: When a single defined term carries different meanings in different parts of a document, AI may flatten those distinctions

- Precedent misattribution: In case law summaries, holding and dicta can be conflated, and outdated precedent can be cited as controlling authority

Overreliance on AI summaries can cause confidentiality and value-dilution issues, particularly when strategic or IP-sensitive terms are compressed out of the final output. A summary that omits the nuances of an exclusivity clause or a non-compete carveout can cause significant downstream harm when acted upon without review.

Following AI legal risk best practices means building verification steps that specifically target these failure modes, not just checking that the summary reads well.

Pro Tip: Always cross-reference AI-generated summaries with the source document for any obligation, deadline, or risk factor that will be relied on in a negotiation, filing, or compliance decision. Never treat a clean-looking summary as confirmed.

Evaluating and validating AI-generated legal summaries

Knowing that AI summaries can fail is one thing. Knowing how to measure and improve summary quality is what separates teams that use AI responsibly from those that get burned by it.

Modern evaluation approaches go well beyond surface metrics, incorporating checklist-based scoring, reference-based evaluation frameworks, and LLM-judge methods that assess factual alignment, completeness, and legal accuracy against gold-standard summaries. ROUGE scores, which measure text overlap between a generated summary and a reference, remain widely used but are poorly suited to legal content where a single missing clause can be more important than overall text similarity.
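The weakness of overlap metrics is easy to see with a toy example. The sketch below implements a simplified ROUGE-1 recall (unigram overlap divided by the number of reference unigrams, with no stemming or stopword handling, so it is not the official scorer). The lease text and figures are invented for illustration.

```python
from collections import Counter
import re


def _tokens(text: str) -> list[str]:
    # Lowercase alphanumeric tokens; punctuation is dropped.
    return re.findall(r"[a-z0-9]+", text.lower())


def rouge1_recall(candidate: str, reference: str) -> float:
    """Fraction of reference unigrams that also appear in the candidate."""
    cand = Counter(_tokens(candidate))
    ref = Counter(_tokens(reference))
    overlap = sum(min(count, cand[tok]) for tok, count in ref.items())
    return overlap / max(sum(ref.values()), 1)


reference = (
    "The lease runs for five years from 1 January 2025. "
    "Rent is 10000 per month and rises 3 percent each year. "
    "The tenant may break the lease after year three on six months notice. "
    "Landlord liability is capped at 50000."
)
# Same summary, but the liability cap sentence has been dropped.
candidate = (
    "The lease runs for five years from 1 January 2025. "
    "Rent is 10000 per month and rises 3 percent each year. "
    "The tenant may break the lease after year three on six months notice."
)

print(round(rouge1_recall(candidate, reference), 2))  # 0.85
```

The candidate scores 0.85 even though it omits the liability cap entirely, which is exactly the kind of omission a legal reviewer would treat as a serious defect.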

Empirical results are encouraging but not a reason to reduce oversight. On some legal research tasks, AI now outperforms average lawyer baselines in accuracy benchmarks. That is a significant result, but it comes with an important caveat: benchmark tasks are structured and controlled. Real legal work involves ambiguity, strategy, and judgment that no benchmark fully captures.

| Evaluation method | Strength | Limitation |
|---|---|---|
| ROUGE scoring | Easy to automate | Poor fit for legal completeness |
| Checklist-based review | Captures required elements | Requires pre-built legal checklist |
| LLM-judge evaluation | Scalable, nuanced | Can inherit model biases |
| Human expert review | Highest accuracy | Time-intensive, not scalable alone |

Here is a practical validation workflow for legal teams:

1. Define required elements before running the summary, such as parties, key dates, core obligations, penalty triggers, and governing law.

2. Run the AI summary and compare each required element against the source document clause by clause.

3. Score completeness by checking whether every defined element was captured and correctly described.

4. Flag discrepancies for human review, particularly around conditional obligations and jurisdiction-specific terms.

5. Document the validation in your review trail so the quality check is auditable and defensible.

Traceability is the foundation of this validation process. Without source citations in the summary output, steps two and three become labor-intensive guesswork rather than efficient spot-checking. Teams at every level, from law students through to senior partners, benefit from the same structured validation model, scaled appropriately to the stakes of each task. For teams evaluating platform costs alongside capabilities, reviewing pricing options alongside validation requirements is a practical starting point.
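The mechanical part of the validation workflow above can be sketched in a few lines of Python. This is a minimal illustration under assumed names: the summary is modelled as a dictionary keyed by element name, and `REQUIRED_ELEMENTS`, `ValidationResult`, and `validate_summary` are hypothetical. It checks only whether each required element is present and carries a clause reference. Whether the element is correctly described remains a human judgment.

```python
from dataclasses import dataclass, field

# Hypothetical checklist, defined before the summary is run.
REQUIRED_ELEMENTS = [
    "parties",
    "key_dates",
    "core_obligations",
    "penalty_triggers",
    "governing_law",
]


@dataclass
class ValidationResult:
    missing: list[str] = field(default_factory=list)    # not captured at all
    unsourced: list[str] = field(default_factory=list)  # captured, no clause link
    completeness: float = 0.0                           # captured / required


def validate_summary(summary: dict[str, dict], required: list[str]) -> ValidationResult:
    """Check presence and source linkage of each required element."""
    result = ValidationResult()
    for name in required:
        entry = summary.get(name)
        if entry is None or not entry.get("text"):
            result.missing.append(name)
        elif not entry.get("clause_ref"):
            result.unsourced.append(name)
    captured = len(required) - len(result.missing)
    result.completeness = captured / len(required) if required else 1.0
    return result


# Invented example output from a summarization tool.
summary = {
    "parties": {"text": "Acme Ltd and Beta GmbH", "clause_ref": "1.1"},
    "key_dates": {"text": "Term starts 1 January 2025", "clause_ref": "2.1"},
    "core_obligations": {"text": "Monthly delivery of services", "clause_ref": ""},
    "governing_law": {"text": "Laws of England and Wales", "clause_ref": "18.1"},
}

result = validate_summary(summary, REQUIRED_ELEMENTS)
print(result.missing)       # ['penalty_triggers']
print(result.unsourced)     # ['core_obligations']
print(result.completeness)  # 0.8
```

Anything listed under `missing` or `unsourced` goes to a reviewer, and the result itself can be stored alongside the summary as part of the review trail.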

Integrating AI legal summaries into responsible workflows

Having good tools and good evaluation methods still isn’t enough without a clear workflow that defines who uses AI summaries, when, and with what oversight. The clearest failure pattern in legal AI adoption is not a bad tool. It is an undefined process.

Teams should treat AI summaries as decision-support, with structured extraction, constrained prompts, and human verification checkpoints built into every stage. That framing changes how you design the workflow from the start.

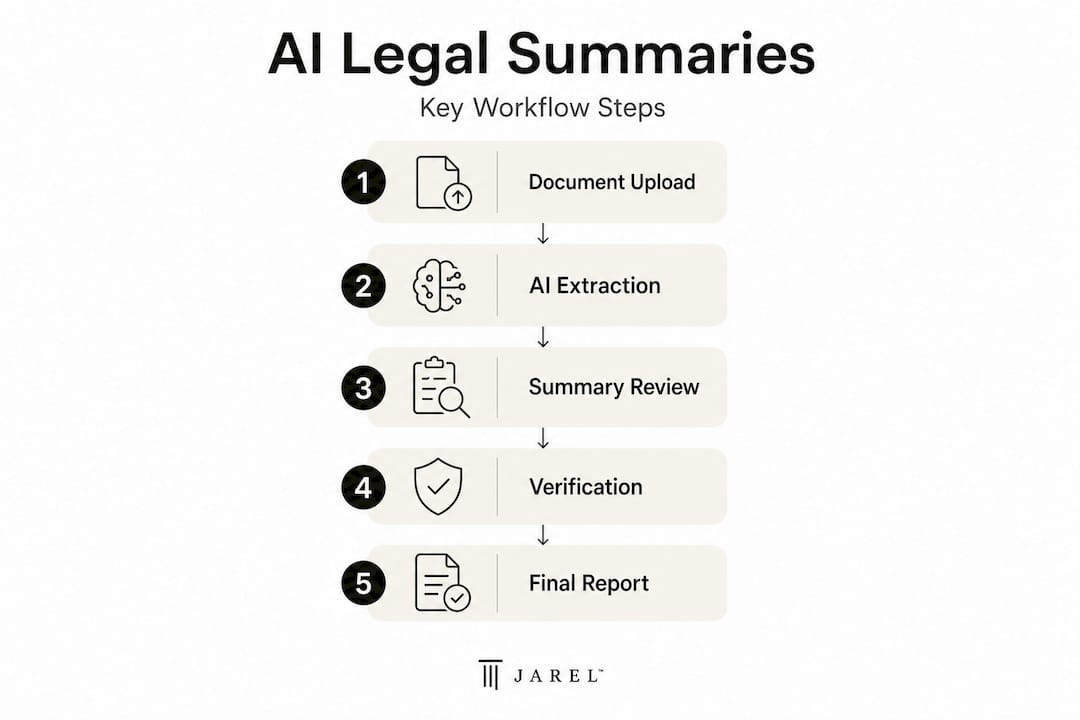

A defensible AI summary workflow looks like this:

- Input scoping: Define which documents go into the AI system, what questions you are asking it to answer, and what output format is required. Garbage in, garbage out is especially true in legal contexts.

- Structured extraction: Use a system configured to extract specific clause categories rather than generating open-ended summaries. Constrain the output schema.

- Source linkage: Ensure every extracted element in the summary carries a citation or reference to the originating clause. This is non-negotiable for defensible outputs.

- Human verification checkpoint: A qualified reviewer checks the summary against the source document for all high-stakes elements. This step cannot be automated away.

- Final use and documentation: The reviewed summary enters the workflow as decision-support. Document who reviewed it, when, and what was changed. That audit trail matters if the summary’s conclusions are ever challenged.

This model works across contexts. In compliance, it means the AI flags regulatory obligations and a compliance officer verifies interpretation. In contract review, it means the AI extracts commercial terms and a lawyer confirms risk posture before negotiation.
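For the documentation step, a review record can be as simple as an immutable object that ties a reviewer and timestamp to a hash of the exact source text that was checked. The sketch below uses only the Python standard library. The `ReviewRecord` fields and `record_review` helper are assumptions for illustration, and a real audit log would also need secure, append-only storage.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
import hashlib
import json


@dataclass(frozen=True)
class ReviewRecord:
    """Who reviewed which document, when, and what they changed (hypothetical)."""
    document_sha256: str      # fingerprint of the source text that was reviewed
    reviewer: str
    reviewed_at: str          # ISO 8601 timestamp in UTC
    changes: tuple[str, ...]  # short notes on each correction made
    approved: bool


def record_review(source_text: str, reviewer: str,
                  changes: list[str], approved: bool) -> ReviewRecord:
    """Build an audit record for one human verification checkpoint."""
    return ReviewRecord(
        document_sha256=hashlib.sha256(source_text.encode("utf-8")).hexdigest(),
        reviewer=reviewer,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
        changes=tuple(changes),
        approved=approved,
    )


record = record_review(
    source_text="9.1 Liability is capped at the fees paid in the prior 12 months.",
    reviewer="reviewer@example.com",
    changes=["Corrected liability cap description for clause 9.1"],
    approved=True,
)
print(json.dumps(asdict(record), indent=2))
```

Hashing the source text means a later reader can confirm that the document on file is the same version the reviewer actually checked.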

Pro Tip: For enterprise or regulated environments, always insist on enterprise-grade legal AI tools that produce source-linked, traceable outputs with built-in audit logs. Platforms that cannot show you where each summary element came from should not be part of a defensible legal workflow.

Why true legal defensibility means treating AI summaries as decision-support, not gospel

Here is the uncomfortable truth that most AI vendor narratives avoid: the biggest risk in legal AI right now is not a catastrophically wrong output. It is a confidently wrong output that looks right. Legal professionals are trained to spot ambiguity in document language. They are not yet uniformly trained to spot ambiguity in AI outputs, which often arrive formatted, structured, and fluent, even when they are factually unreliable.

The “black box” trust problem is real and specific to legal work. When an AI summary omits a governing-law clause or mischaracterizes a liability cap, the error may not surface until the moment it matters most, in litigation, regulatory review, or a deal gone wrong. Source-linked tools fundamentally change this dynamic because they force transparency. Every claim in the output points to a specific source. Reviewers can verify, challenge, or override. Generic chatbot-style summaries provide no such accountability mechanism.

Real-world errors are documented and instructive. Cases where AI systems applied the wrong state’s legal standard to a contract dispute, or cited superseded regulatory guidance as current, illustrate that even state-of-the-art systems carry jurisdiction and recency blind spots. These are not edge cases you can ignore because your documents are “standard.” Complex commercial agreements are rarely standard where it counts.

The benchmark data showing AI outperforming lawyers on some tasks is not a reason to reduce oversight. It is a reason to recalibrate how you use AI. Use it on high-volume, pattern-based extraction tasks where its consistency advantage is real. Keep lawyer judgment in the loop for contextual interpretation, strategic risk assessment, and any output that will be relied on in a dispute. Pursuing responsible AI adoption means building that distinction into your process deliberately, not leaving it to individual discretion.

Source-linked AI tools for reliable legal summaries

If this guide has made one thing clear, it is that the quality of your AI summarization workflow depends heavily on the quality and architecture of the tools you choose. Not all platforms are built with legal traceability in mind.

Jarel is built specifically for legal teams that need source-linked, auditable AI outputs. Every summary generated within the platform traces back to the originating clause or source document, making verification fast and defensible rather than a manual exercise. Whether you need legal AI directly in your inbox, dedicated legal research with AI that connects findings to authoritative sources, or a unified workspace for contract review and compliance, Jarel’s architecture keeps human oversight at the center. Teams that are serious about responsible AI adoption should explore Jarel solutions and see how source-linked transparency changes the way legal work gets done.

Frequently asked questions

What is included in an AI-generated legal summary?

A typical summary captures obligations, key facts, risks, and deadlines from contracts or filings in plain language, often with traceable links back to the source document text. The best outputs also include clause references so reviewers can verify each element quickly.

How accurate are AI-generated legal summaries compared to human lawyers?

On select tasks, AI now outperforms lawyer baselines in accuracy benchmarks, but performance varies significantly by use case, document type, and jurisdiction. Human review remains essential for any output used in a legal decision.

What are the main risks of AI-generated legal summaries?

Omissions, hallucinations, and loss of confidential or critical details are the primary risks; legal summarization errors can include misrepresented obligations and fabricated citations that look structurally correct. Always verify summaries against original source documents before relying on them.

How should legal teams use AI summaries in their workflow?

Use them for decision-support rather than definitive legal conclusions, and always build human verification checkpoints into the review process before any summary output is acted upon.

Do AI-generated legal summaries protect confidentiality?

Not on their own. Summaries can significantly reduce review time, but overreliance on condensed outputs risks stripping away confidential or strategically sensitive detail, so a qualified reviewer should validate the final output before it circulates.