How to use case law effectively in legal research

TL;DR:

- Case law anchors legal arguments by transforming statutory principles into concrete, enforceable standards through detailed analysis.

- AI tools in legal research must trace, validate, and link case law to ensure trustworthy, jurisdictionally accurate, and defensible conclusions.

Case law is not just a citation formality. It is the interpretive backbone of any legal argument, compliance position, or risk assessment your team produces. Yet many lawyers, including experienced ones, treat it as supplementary material rather than the primary lens through which legal principles become real and enforceable. That blind spot creates risk. When case law is misapplied, outdated, or pulled without validation, the consequences ripple through briefs, contracts, and compliance determinations in ways that are hard to reverse. This article walks you through the full picture: how case law fits into structured legal research, how to extract and validate it correctly, and what changes when AI enters the workflow.

Table of Contents

- Where case law fits in modern legal research

- How to extract and validate case law for research impact

- Case law as foundation for AI-driven research and compliance

- AI challenges: Distinguishing legal significance and jurisdiction

- Benchmarking AI and human reliability in case law research

- What most legal teams overlook when integrating case law with AI

- Take your case law research workflow to the next level

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

| Case law’s research foundation | Case law offers real-world interpretation and judicial reasoning that anchor legal research beyond statutes alone. |

| Extraction and validation | Reliable research depends on extracting the right case elements and thoroughly validating their current authority. |

| AI’s dual role | AI elevates case law as a verifiable substrate, but demands transparency and structured workflows for compliance. |

| Jurisdictional challenges | Distinguishing binding from persuasive precedent and correct jurisdictional application is critical—especially with AI. |

| Empirical benchmarking | Benchmarks reveal both the strengths and limits of AI versus human case law research, guiding best practice adoption. |

Where case law fits in modern legal research

Before you can use case law strategically, you need to understand where it actually belongs in the research hierarchy. A well-structured legal research workflow follows a clear sequence: first, issue analysis to define the legal question precisely; second, secondary sources like treatises, law review articles, and practice guides to build conceptual grounding; and third, primary sources, including statutes and case law, to anchor your conclusions in binding authority.

Legal research efficiency depends heavily on respecting this sequence. Skipping to case law too early, before you have framed the issue tightly, is one of the most common errors junior lawyers make. You end up reading cases that are tangentially related rather than directly on point, and that wastes hours.

As courts interpret legal principles in specific factual situations, case law provides something statutes and regulations simply cannot: real-world context. A statute might prohibit “unreasonable” conduct, but it is the case law that tells you what a court has actually found to be unreasonable in circumstances similar to yours.

“Case law is not decorative. It is the mechanism through which abstract legal text becomes a living, applicable standard.”

When reading a court opinion, your goal is never just to extract the outcome. You need to dissect five components systematically:

- Procedural history: How did this case arrive at this court?

- Facts: Which facts did the court consider legally significant?

- Issue: What precise legal question did the court resolve?

- Holding: What did the court decide, and how narrowly or broadly?

- Reasoning: What analytical path did the court follow to get there?

This structure matters because the holding is what binds. The reasoning illuminates how broadly or narrowly that holding should be read. Getting these confused leads to over-reliance on persuasive commentary rather than actual precedent.

| Research stage | Output | Role of case law |

|---|---|---|

| Issue analysis | Defined legal question | Not yet applicable |

| Secondary sources | Conceptual framework | Illustrative references |

| Primary sources | Binding authority | Decisive and foundational |

The legal intake process benefits directly from this structured approach. When intake identifies the legal issue precisely, subsequent case law research becomes targeted rather than exploratory, saving time and improving reliability.

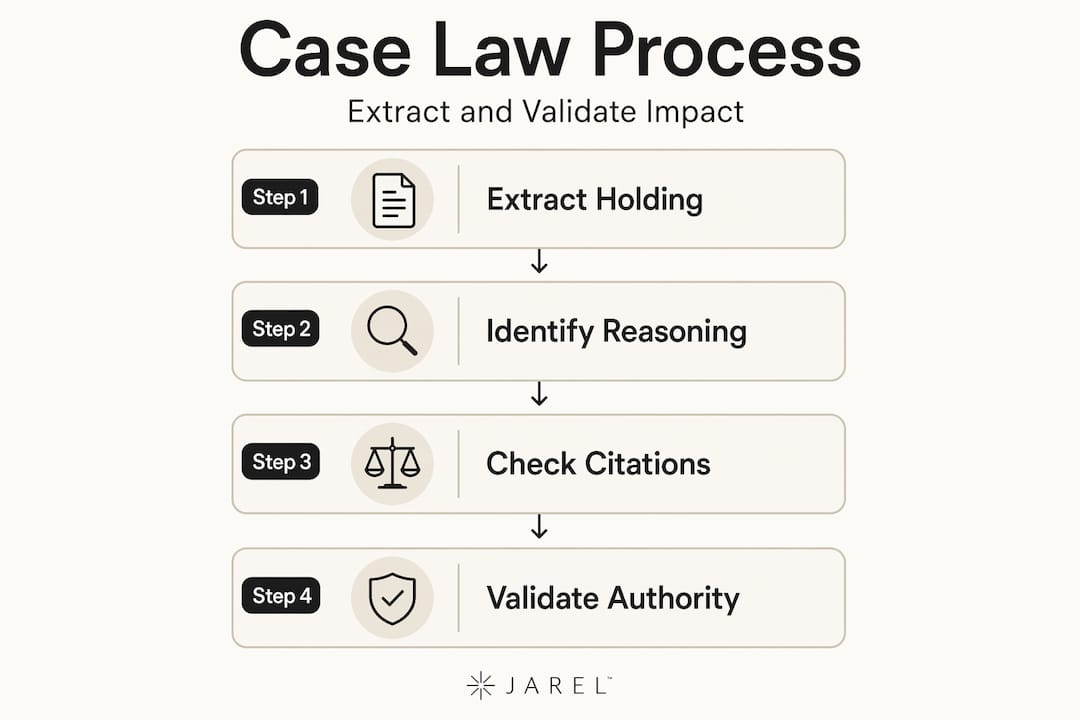

How to extract and validate case law for research impact

Finding a case is only half the work. Extracting the right elements from it and confirming it is still good law is what separates defensible research from risky guesswork.

The case law methodology for junior lawyers recommended by Stanford Law treats the work as a two-phase process: extraction first, validation second. Each phase has distinct tasks and tools.

Extraction means identifying exactly what you need from the opinion: the holding, the reasoning, and any specific factual conditions that the court relied on. Do not copy the headline rule. Dig into whether the holding was unanimous, whether a concurrence narrowed it, or whether a dissent signals an evolving doctrinal tension. Also critically, understand the difference between ratio decidendi and obiter dictum. The ratio is the legally binding principle. The dictum is commentary, possibly persuasive but not binding. Conflating them is a common mistake that can undermine a legal argument entirely.

Validation means confirming that the case has not been overruled, reversed, or negatively treated by a subsequent court. Using citators, such as those built into major legal research platforms, is the standard method. But validation goes further than checking a simple flag. You should understand locating and updating case law as a continuous habit, not a one-time check.

Here is a practical workflow for both phases:

- Identify the legally relevant issue from your case facts.

- Locate cases that address the same or analogous issue, prioritizing binding authority in the applicable jurisdiction.

- Extract the holding, distinguishing ratio from dictum.

- Note any concurrences or dissents that complicate application.

- Run each case through a citator to confirm current validity.

- Check for any subsequent decisions that limit, distinguish, or expand the holding.

- Document your validation steps and source links for the file record.

Pro Tip: When you find a highly relevant case, work backwards through its citations as well as forwards through cases that cite it. This two-direction approach surfaces the doctrinal history and reveals how courts have applied or constrained the principle over time.

| Task | Tool type | What to look for |

|---|---|---|

| Extraction | Case law database | Holding, ratio, reasoning |

| Validation | Citator | Negative treatment, subsequent history |

| Jurisdictional fit | Research guide | Applicable court hierarchy |

Effective legal document management depends on keeping these extraction and validation steps documented and linked. Especially in team environments, where multiple lawyers may reference the same cases, having a shared record of which cases are validated and which remain under review prevents duplication of effort and avoids reliance on unchecked authority.

For contract and drafting work, connecting validated case law to the specific clause you are drafting is good practice. Understanding how courts have interpreted similar language in AI contract drafting contexts illustrates exactly why this matters, especially as new clause language enters practice without extensive judicial interpretation yet.

Case law as foundation for AI-driven research and compliance

When AI enters the legal research workflow, the role of case law shifts. It moves from being a search result you manually review to being a structured input and verification substrate that AI systems must handle reliably.

The transformation is significant. AI-driven legal research treats case law not merely as retrieved documents but as a verification layer. For an AI output to be usable in compliance or litigation contexts, the underlying case law it draws on must be traceable, source-linked, and auditable. If you cannot identify which case supported which conclusion, the AI output is legally inert.

This has direct operational consequences for how you build workflows:

- Every AI-generated legal analysis should link back to specific cases with full citations.

- The system should flag when a case cited falls outside the applicable jurisdiction.

- Audit trails must document what source material was available when the AI generated the analysis.

- Human review should confirm that the cases relied on were correctly characterized.

- Version control matters: if a case is overruled after the AI analysis was generated, that should be detectable in the record.

“An AI tool that retrieves cases without tracing their authority or documenting its reasoning is not a research tool. It is a search engine with extra steps.”

The accountability question is real. In responsible AI legal use, the professional responsibility obligation rests with the lawyer, not the tool. That means the workflow must be built so that traceability is native to the process, not retrofitted as an afterthought.

Pro Tip: Before deploying any AI research tool in a client-facing context, audit its output format. Ask: does it cite specific cases with links? Does it distinguish holdings from dicta? Does it indicate jurisdiction? If the answer to any of these is no, your validation burden increases substantially.

AI challenges: Distinguishing legal significance and jurisdiction

AI tools face specific, well-documented limitations in legal research contexts. Two of the most consequential are the failure to consistently distinguish ratio from dictum, and the failure to apply jurisdictionally correct frameworks.

The doctrinal hierarchy challenge is a real limitation: AI models that retrieve or summarize case law still need systems that can distinguish legally significant distinctions and select the correct legal framework by jurisdiction, otherwise outputs may be unreliable in high-stakes tasks.

Here is what this looks like in practice:

- An AI tool might quote extensively from a judge’s reasoning, treating persuasive dictum as if it were the binding holding.

- A tool calibrated on US federal case law may apply federal doctrines when a state-specific framework governs the issue.

- When two courts in different jurisdictions have reached contrary holdings, an AI tool may not flag the conflict, presenting one holding as though it were universal.

| Challenge | Risk to legal work | Required mitigation |

|---|---|---|

| Ratio vs. dictum confusion | Relying on non-binding commentary as precedent | Manual review of extracted holdings |

| Jurisdictional mismatch | Applying wrong legal framework | Jurisdiction-locking before AI research |

| Conflicting authority | Missing intra-circuit splits | Human review of authority weight |

For in-house AI compliance management teams, jurisdictional accuracy is especially critical. Regulatory requirements vary by jurisdiction in ways that are not always intuitive, and a compliance position grounded in the wrong court’s interpretation of a statute can expose the company to significant liability.

A 2024 survey found that over 60% of in-house legal teams reported concern about AI tools producing jurisdictionally misaligned legal summaries. That figure underscores that this is not a theoretical problem. It is a workflow design problem that requires deliberate human checkpoints.

Benchmarking AI and human reliability in case law research

How do you actually measure whether AI is performing reliably in case law-dependent tasks? Empirical benchmarks are increasingly the answer.

The human vs. AI legal research comparison framework evaluates AI outputs against three dimensions: accuracy (is the law correctly stated?), authoritativeness (are the cited sources binding and appropriate?), and appropriateness (is the analysis relevant to the specific issue at hand?). Human legal researchers are scored against the same dimensions to create a meaningful baseline.

The findings are instructive. AI tools can match or even exceed human performance on authoritativeness, particularly when the task involves retrieving well-established precedents in a narrowly defined area. Where AI tends to fall short is in nuanced, multijurisdictional questions, novel legal issues with limited precedent, and fact-intensive analyses that require weighing case law against a specific client’s circumstances.

“A benchmark is not just a score. It tells you where you can trust AI output and where you must slow down and apply expert judgment.”

For practical implementation, consider a three-step approach when evaluating any AI research tool for your team:

- Test against known cases. Run the tool against research questions where you already know the correct answer. Evaluate accuracy, citation quality, and whether the tool correctly identified binding versus persuasive authority.

- Stress-test jurisdictional precision. Ask the tool questions involving state-specific or cross-border regulatory issues. Confirm that the cases it surfaces are from the correct jurisdiction and court level.

- Assess explainability. Can the tool show you which specific passage of the case supports its conclusion? If not, your ability to verify its output is limited.

These steps connect directly to research efficiency strategies that high-performing legal teams use. AI that is properly benchmarked and integrated with human checkpoints accelerates output quality rather than simply accelerating volume.

What most legal teams overlook when integrating case law with AI

Here is the honest observation: most legal teams pursuing AI adoption are optimizing for speed and coverage. They want to know how many documents the tool can review, how quickly it can surface relevant cases, and whether it reduces research hours. Those are reasonable objectives. But they are not the right primary question.

The right question is whether the output is traceable and defensible. Speed without traceability creates false confidence. A fast, well-organized research memo that draws on unvalidated or incorrectly characterized case law does not save time. It defers the problem to a more expensive moment, typically in court, in a regulatory audit, or in a deal that has already closed.

The operationalizing compliance with AI perspective from White & Case’s 2025 global compliance benchmarking survey is clear: in-house teams that achieve the best outcomes from AI tools are those that build case law integration into auditable, decision-ready workflows rather than treating it as an information retrieval upgrade.

What that looks like in practice is a workflow where every AI-generated legal conclusion is linked to a specific cited source, every source is validated before the output is finalized, and every step is logged in a reviewable record. This is not bureaucratic overhead. This is what allows the work to withstand scrutiny.

The teams that will get the most from AI in legal research are those that treat governance and best practices as a workflow design problem, not a compliance checkbox. Build the traceability in from the start. Audit regularly. Require that AI outputs cite the case, the court, the jurisdiction, and the specific holding. That is the foundation of trustworthy AI-assisted legal work.

Take your case law research workflow to the next level

If your team is serious about making case law integration a strength rather than a liability, the architecture of your research tools matters as much as the quality of your lawyers.

Jarel is built specifically for legal teams that need AI-powered research with source-linked outputs, audit trails, and human oversight baked in at every step. Whether you are conducting AI-powered legal research for litigation support or compliance mapping, or managing contracts and regulatory documents directly through your inbox via the Outlook legal AI add-in, the platform keeps every conclusion connected to the authority behind it. For AI for legal teams managing high volumes of research and review work, Jarel provides the traceable, verifiable environment that modern legal work demands.

Frequently asked questions

How does case law differ from statutes in legal research?

Case law interprets legal principles in specific factual situations, adding nuance and precedent that statutory text alone cannot provide. Statutes define the rule; case law shows how courts apply it when real-world facts are messy and contested.

What role does validation play in using case law?

Validation is what confirms the case is still good law, ensuring you are not building an argument on a holding that has been overruled or undermined. Junior lawyers must validate every case they intend to cite, using citators to check for negative subsequent treatment.

Can AI tools reliably distinguish between ratio decidendi and dicta?

Many current AI tools struggle to make this distinction with consistency because they process text rather than analyze doctrinal hierarchy. AI systems may mishandle legal distinctions unless they are specifically designed and trained to do so, making human review of extracted holdings essential.

Are AI-driven legal research tools more authoritative than human researchers?

It depends on the task. Legal AI can outperform human researchers in authoritativeness on well-established, narrowly defined questions, but typically lags when the issue involves jurisdictional complexity, conflicting authority, or novel factual circumstances requiring expert judgment.